AI Content Watermarking Rules India: In the digital era, the old saying “seeing is believing” no longer holds true. The rapid rise of Artificial Intelligence (AI) has taken technology to new heights, but deepfakes and fake AI videos have raised serious concerns in society.

Recognizing this growing threat, the Government of India has moved into action. The Ministry of Electronics and IT (MeitY) has prepared a strict draft policy on AI content watermarking. Its main goal is to sharpen the distinction between truth and falsehood in the digital world. Here on 1Tak, let’s look in detail at what these new rules mean, how global laws compare, and how they aim to make your digital life safer.

What Is the Government’s New ‘AI Watermark’ Plan?

Under the India AI Mission, a major transformation is underway. According to senior officials and India AI Mission CEO Abhishek Singh, the government is bringing new rules that will help instantly identify any AI-generated content.

In simple terms, just as a hallmark certifies the purity of gold, every piece of online content will soon carry a “digital watermark” or label that reveals whether it was created by a human or a machine.

Key Points of the Draft:

- Mandatory labeling: Any video, image, or text created using AI tools (like Sora, Midjourney, or ChatGPT) must clearly carry a disclaimer.

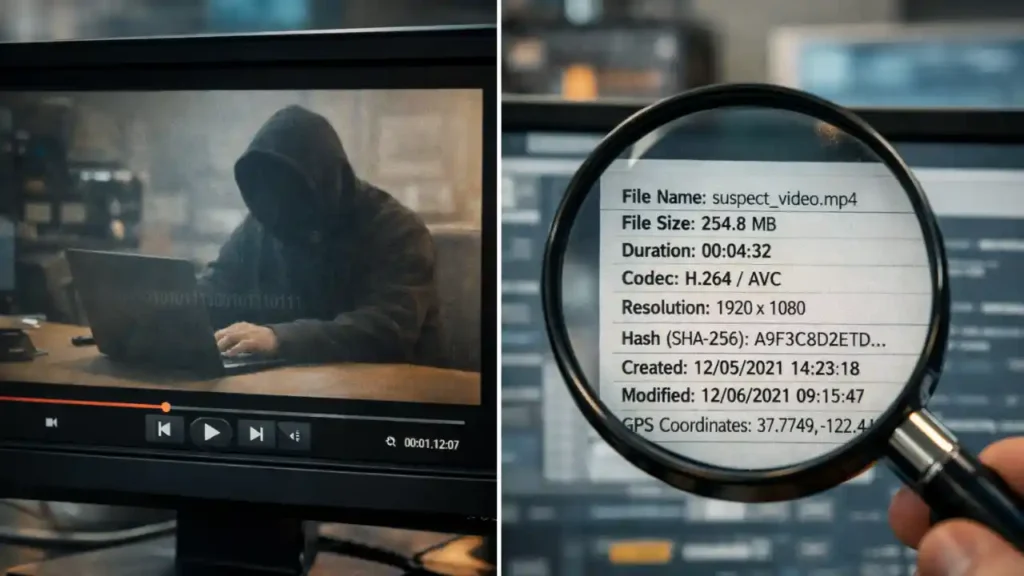

- Metadata embedding: Beyond a visible label, the file will also carry hidden provenance data (metadata) designed so that tampering can be detected.

- Accountability enforcement: Social media platforms will be required to automatically detect and remove deepfake content that lacks this label.
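Read together, the three draft points amount to a simple decision rule on the platform side. The following is only a minimal sketch of that rule, not the draft's actual mechanism: the `Upload` fields and the `moderation_action` function are hypothetical names introduced for illustration, and the detector that sets `flagged_synthetic` is assumed to exist.

```python
from dataclasses import dataclass


@dataclass
class Upload:
    # All three fields are hypothetical, for illustration only.
    visible_label: bool        # a visible "AI-generated" disclaimer is present
    provenance_metadata: bool  # embedded provenance metadata is present
    flagged_synthetic: bool    # the platform's detector believes the content is AI-generated


def moderation_action(upload: Upload) -> str:
    """Return "allow" or "remove" under the simplified rule described above."""
    if not upload.flagged_synthetic:
        # Content not identified as AI-generated is outside the labeling rule.
        return "allow"
    if upload.visible_label and upload.provenance_metadata:
        # AI-generated content that carries both the label and the metadata.
        return "allow"
    # AI-generated content that is missing the label or the metadata.
    return "remove"


print(moderation_action(Upload(True, True, True)))    # allow
print(moderation_action(Upload(False, True, True)))   # remove
```

A real system would likely have more outcomes than these two (for example, asking the uploader to add a label), but the draft as described here only mentions detection and removal.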

Why Are Strict Rules Needed?

In recent years, demand for AI content watermarking rules in India has been rising fast. After deepfake videos of celebrities like Rashmika Mandanna, Alia Bhatt, and even Prime Minister Modi went viral, it became clear that this technology is no longer limited to entertainment.

Cybersecurity experts warn that if AI remains uncontrolled, it could harm both democracy and social order. Deepfake videos are now being used not only for experiments but also to influence elections, commit financial fraud, and damage reputations. In this context, the government’s move is seen as a proactive step toward making India’s digital space more secure and trustworthy by 2026.

Global Scenario of AI Regulation

It’s not just India: governments around the world are taking AI regulation seriously. To understand India’s approach, it helps to look at global developments.

| Country/Region | Law/Regulation | Current Status |
|---|---|---|
| European Union (EU) | EU AI Act | The world’s most comprehensive AI law is already in force. It imposes strict limits on high-risk AI systems. |
| China | Deep Synthesis Provisions | China has made it mandatory to tag all deepfake content as “synthetic.” |
| United States (USA) | Executive Orders | For now, companies follow voluntary commitments, but stricter legislation is in the works. |
| India | Digital India Act (Upcoming) | Through updates to IT Rules 2021 and the new draft, watermarking will become mandatory. |

India’s approach is hybrid: the focus is on encouraging innovation without compromising on safety. The MeitY draft aims to strike that balance.

How Will the Watermark Work?

Users are naturally curious: how exactly will this AI content watermarking system operate, and can someone remove it? Technically, it will work on two levels:

1. Visible Watermark

This will appear on-screen, similar to a TV channel logo, showing messages like “Generated by AI” or “Synthetic Media.” Its purpose is to alert viewers immediately.

2. Invisible Cryptographic Watermark

This is the core component. It’s not visible to the naked eye but can be detected by specialized software. Using standards like C2PA (Coalition for Content Provenance and Authenticity), a digitally signed provenance record will be embedded within the file. The aim is for the marking to survive cropping or editing, although a signed record of this kind mainly makes changes detectable; on its own it does not guarantee that the record cannot be stripped out.
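To make the idea of a signed provenance record concrete, here is a simplified sketch. It is not the C2PA format: real C2PA manifests are signed with certificate-backed keys, whereas this example uses a shared HMAC secret from Python's standard library as a stand-in. The names `DEMO_KEY`, `make_manifest`, and `verify_manifest` are hypothetical and exist only for this illustration.

```python
import hashlib
import hmac
import json

# Assumption: a shared demo secret. Real C2PA signing uses certificate-backed
# keys, not an HMAC secret; this is only a stand-in for illustration.
DEMO_KEY = b"demo-signing-key"


def make_manifest(content: bytes, generator: str) -> dict:
    """Build a signed record that binds a label to the exact content bytes."""
    claim = {
        "generator": generator,
        "label": "Generated by AI",
        "sha256": hashlib.sha256(content).hexdigest(),
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(content: bytes, manifest: dict) -> bool:
    """Check that the claim is unmodified and still matches the content."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claim itself was altered
    return manifest["claim"]["sha256"] == hashlib.sha256(content).hexdigest()


video = b"fake video bytes"
manifest = make_manifest(video, "example-model")
print(verify_manifest(video, manifest))            # True
print(verify_manifest(video + b"edit", manifest))  # False
```

In this simplified model, any change to the bytes makes verification fail, which is how tampering is detected. Surviving edits such as cropping would require a different technique, such as a watermark embedded in the pixels themselves, which this sketch does not attempt.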

Challenges and the Road Ahead

However, the road ahead is not entirely smooth. Open-source AI models pose a major challenge. Tools available on the dark web or unregulated platforms may not fall under Indian jurisdiction.

Additionally, hackers might develop watermark-removal tools. The government will need to create a dynamic system that can evolve alongside technology. According to the draft, these regulations are expected to be fully implemented by the second quarter (Q2) of 2026.

AI is like a double-edged sword. If not controlled properly, it has the potential to distort our reality. In such a scenario, the government’s move is seen as a vital and timely effort to restore public trust in digital platforms.
